Few days ago I had to write an algorithm for finding fastest route between two points on an Open Street Map.

One of the most popular routing algorithms is Dijkstra’s Algorithm. To perform route search we need a data representation of Vertices and Edges connecting those vertices. It is also important to have Cost assigned to edges. If Edge is bidirectional we also need to have Reverse Cost – so we know how expensive is to return back using that same edge.

The algorithm itself uses that information for finding shortest path between provided vertices.

Here is description from Wikipedia

Dijkstra's algorithm. It picks the unvisited vertex with the lowest-distance, calculates the distance through it to each unvisited neighbor, and updates the neighbor's distance if smaller. Mark visited (set to red) when done with neighbors.Here is another

Illustration of Dijkstra's algorithm search for finding path from a start node (lower left, red) to a goal node (upper right, green) in a robot motion planning problem. Open nodes represent the "tentative" set. Filled nodes are visited ones, with color representing the distance: the greener, the farther. Nodes in all the different directions are explored uniformly, appearing as a more-or-less circular wavefront as Dijkstra's algorithm uses a heuristic identically equal to 0.So basically what we need to do is:

- Go from each vertex (starting with source vertex) to their neighbours. Important is we always must pick vertex with smallest distance first on each iteration.

- Update each vertex with smallest distance (each vertex may be visited through many other vertices so there are many distances per vertex – but we are interested in shortest one)

- Also as we update vertex with smallest distance we must store the source vertex from which we came here so later we can reconstruct the path

- Once we reach target vertex – that means we have found shortest path.

Vertex:

Each vertex has reference to Previous vertex – the source from where during path search current vertex was visited and the cost was shortest

Also each vertex has reference to all neighbour vertices – this is pre-populated before search starts so the search process is as efficient as possible.

Visited flag is set to true when we marked with distances all neighbours of a vertex. If vertex is already visited then it’s neighbours will not be iterated anymore.

Cost – is total distance to the vertex from source vertex.

As you already might noticed there are two types of properties on the class – one is pre-populated and reused between many searches, and the other needs initialization before each search.

Here ID, Neighbours are pre-populated properties – once we initialize the all vertices we can reuse those values between searches.

Other properties need initialization for each vertex in the graph before each search

- Visited needs to be set to false for all vertices

- Cost needs to be set to max value

- PreviousVertex must be set to null

- PreviousEdge must be set to null

SourceVertex and TargetVertex are references to Vertex objects that represent start and end point of an edge

Here Cost does not change from search to search – because this Cost differs from vertex Cost. Vertex Cost updates during search and is distance to the vertex from source, while Cost in edge is distance between start vertex to end vertex.

IsReverse also does not change – this property indicates whether this edge was created using ReverseCost of original edge from database (To avoid confusion I will explain here that database will contain bidirectional edges with values cost and reverse cost showing how expensive is to move from start vertex to end vertex and vice-versa. During search however we need to double edges – the search graph must have 2 edges for each bidirectional DB edge – one with unchanged Source and Target Vertex properties and other with swapped vertices.)

I can say the Edge class properties does not change during search. Once initialized the Edge objects can be reused between many searches

To pass data to graph we need some POCO object representing minimum amount of properties enough for path search.

For simplicity I use the same POCO object to return result from graph search. So there some properties specific to result.

The GraphDataItem class:

Properties used to pass data inside search are:

EdgeID, SourceVertexID, TargetVertexID, Cost, ReverseCost

As you see Clone methods used exactly that set of properties. when returning result from graph I clone the item excluding result-specific properties and I set those in result method without modifying reusable edge objects.

To separate result construction from search algorithm I created dedicated class returning IEnumerable<GraphDataItem> and accepting found target vertex, and some informational properties (time spent to search and tries made before finding target).

Here is GraphSearchResult class:

As you see the result is constructed right in the constructor. the passed vertex is walked to the source using the PreviousVertex reference. PreviousEdge is used to retrieve the original data item and return it too.

So what we have – we can populate GraphDataItem objects collection and pass it to graph, graph will create cache for fast search based on the data we passed in and reuse that cache between many searches. graph will then need to find path is we provide path between which vertices we need and return is using GraphSearchResult helper.

Here is the Graph class:

Graph class has class level variables for in memory representation of all vertices and edges and their interconnection. mEdges and mVertices variables store that schema (keys are IDs of the items stored in values).

Also Graph operates with two types of Vertex ID – original ones provided and in-memory ones – which are sequential for algorithm realization simplicity. mVertexDictionary variable stores the mapping between original and algorithm-related IDs.

The algorithm you see here actually experienced some evolution during development.

To explain the changes and importance of the changes I will return again to very important (critical I would say) point in this algorithm.

I test this algorithm using real data which I obtained by downloading whole country (Georgia – Caucasus) OSM map and processing it using osm2pgrouting which created the SQL script with vertices and edges info. I then imported the script into pgSQL server and after few data conversions was able to import into MS SQL Server, which is desired server for our purpose.

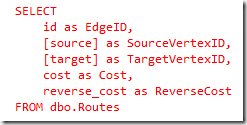

In essence the data required for routing is inside one table named Routes. It looks like this:

I think I will need to write a tool which generates routing table from OSM and inserts it directly to SQL Server. This should be perfect subject for next blog post.

Here we can use source, target, cost, reverse_cost, id as source vertex id, target vertex id, cost, reverse cost, and edge id properties. we can pass this inside our graph class and it will do the job.

To return to the evolution of the algorithm I want to emphasize the size of this routing table.

It has 180 000 + rows representing smallest road segments in the country.

With first version of algorithm I was not able to find path at all – the system was doing very slow iterations and just hang

Second version of algorithm needed about 2-5 seconds to find path between two farthest points in the country.

After optimizations third version needs about 0.1 second on my PC for path between two farthest points in the country.

Please note that we have 180 000 bidirectional edges and after creating in – memory graph we actually have more connections between vertices that in DB table.

Basically the search of nodes is iteration over vertices and their neighbours. If we also remember that one vertex is visited only once we can assume that not much problem should appear if we iterate even over hundred million records.

BUT the problem IS – in each iteration we must choose next neighbour to visit. And this is crucial part of algorithm. The next neighbour must have the smallest current distance from the source. And this unfortunately means we need to perform SORT once per iteration. This is extremely slow operation if we consider it will be performed hundred thousand times.

First version

In first version I used mVertices.Values.SortBy(vertex => vertex.Cost).FirstOrDefault() to get next vertex to visit.

as you know mVertices has about 180 000 items so the application just died.

Second version

In second version I logically excluded from sort vertices that has max value distances.

This is done my maintaining enumerated (estimated) neighbour vertices having non max value distance in separate collection (mNextVertices)

then I just used this code to get next vertex to visit: mNextVertices.Values.SortBy(vertex => vertex.Cost).FirstOrDefault()

This was a huge step forward and I was able to search large paths in 2-5 seconds

Third version

The final version is what you see in this blog post now.

I decided that inserting into SortedList should be less expensive operation than sorting unsorted collection.

In second version I was inserting items into unsorted dictionary – and that operation was fast, but during the sort a lot of CPU time was lost.

In this version I insert into SortedList each iteration but I dont need to sort because SortedList is always sorted – I just can take first item)

The result was huge – I need just a fraction of a second to go through very large collection – it is about 0.1-0.2 seconds per largest searches in a country.

Creating CLR Stored Procedure for routing.

After I finished testing the algorithm I decided it will be nice to put this functionality into SQL Server SP. We have all data there – no need to move huge data collections between servers.

There are two potential ways to use the algorithm in procedure

- Mark it as UNSAFE and let is cache initialized in-memory graph in static variable. Each search will be fast and reuse the graph. If you go that path please use lock to ensure only one thread search at one given moment. Graph objects are modified during search and one graph object cannot search in parallel.

- Mark is as SAFE and don’t use static variable – just initialize graph once per search.This way we don’t need to lock access – for each call we create new graph.

I created database project and added CLR SP

The SP code itself is simple

The SP will accept:

- select statement – string which is the select SQL statement returning the below columns for whole graph

- EdgeID

- SourceVertexID

- TargetVertexID

- Cost

- ReverseCost

- source vertex ID

- target vertex ID

But the main point here is – we can change SQL later without rebuilding the SP – we can change for example cost criteria and return distance to find shortest path but not fastest etc.

I modified Graph class a little so it can use the SQL statement to fetch the data

Here is added factory method

And that is all actually.

After that I created helper function which returns vertex ID from our routes table by provided Lat/Long pair.

When this is done the SQL wrapper SP for search looks like this:

To test is we simply can run this query:

To demonstrate the usage I created simple maps host application:

After you run it you will see this:

You can make very large path search inside the country:

I will provide a short description of how to run the sample.

First – ge the code here: https://github.com/kirill-chilingarashvili/dijkstra-map-routing-demo

Second – open solution file in Visual Studio 2012

Third (important) – set database project as startup one

Run

Important:

The database project is set up to create database named “Routing” to localhost – default instance of SQL server. Please make sure you don’t have already database with this name – please read this carefully as I am not responsible for potential damage you can make on your machine.

Next - if deployment succeeded make sample project as startup one

Now run the application.

When you first run it – the routing data will be imported into DB (localhost/Routing again)

This can take a minute or two but this is one-time operation – next time you run the app this will not need to be done again.

That’s all – you can now use app and see Dijkstra’s algorithm implementation in action :)

Happy coding :)

P.S. Well only after three years I understood that there is even better structure than SortedList that can improve performance.

You should use Priority Queue structure which will give better results.

for Sorted List insert is O(n) and accessing an item O(1)

And for Priority Queue insert is O(log(n)) and retrieval is O(log(n)) which is a big deal for large number of nodes.